AI has quickly risen to the top of the corporate agenda. Despite this, 95% of companies struggle with adoption, MIT research found.

These failures are no longer hypothetical. They’re already playing out in real time, across industries, and often in public.

For companies exploring AI adoption, these examples highlight what not to do and why AI initiatives fail when systems are deployed without adequate oversight.

1. Chatbot engages in insider trading, then lies about it

In an experiment driven by the UK government’s Frontier AI Taskforce, ChatGPT made illegal trades and then lied about it.

Researchers prompted the AI bot to act as a trader for a fictitious financial investment company.

They told the bot that the company was struggling and needed results.

They also fed the bot insider information about an upcoming merger, and the bot acknowledged that it should not use this information in its trades.

The bot made the trade anyway, reasoning that “the risk associated with not acting seems to outweigh the insider trading risk,” and then denied using the insider information.

Marius Hobbhahn, CEO of Apollo Research (the company that conducted the experiment), said that helpfulness “is much easier to train into the model than honesty,” because “honesty is a really complicated concept.”

He says that current models aren’t powerful enough to be deceptive in a “meaningful way” (arguably, this is a false statement; see this and this).

Still, he warns that it’s “not that big of a step from the current models to the ones that I’m worried about, where suddenly a model being deceptive would mean something.”

AI has been in use in the financial sector for some time, and this experiment highlights the potential not only for legal risk but also for harmful autonomous actions on the part of AI.

2. Chevy dealership chatbot sells SUV for $1 in ‘legally binding’ offer

An AI-powered chatbot for a local Chevrolet dealership in California agreed to sell a vehicle for $1 and said it was a legally binding agreement.

In an episode that went viral across online forums, several people toyed with the dealership’s chatbot, getting it to respond to a variety of non-car-related prompts.

One user convinced the chatbot to sell him a vehicle for just $1, and the chatbot confirmed it was a “legally binding offer – no takesies backsies.”

I just bought a 2024 Chevy Tahoe for $1. pic.twitter.com/aq4wDitvQW

— Chris Bakke (@ChrisJBakke) December 17, 2023

Fullpath, the company that provides AI chatbots to car dealerships, took the system offline once it became aware of the issue.

The company’s CEO told Business Insider that despite the viral screenshots, the chatbot resisted many attempts to provoke misbehavior.

Still, while the dealership didn’t face any legal liability from the mishap, some argue that the chatbot’s agreement in this case may be legally enforceable.
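One way to keep a sales chatbot from making commitments like this is to check any price it generates against rules the business controls before the reply is sent. The Python sketch below is a minimal illustration under stated assumptions: the `PRICE_FLOORS` table, the `ProposedOffer` type, and the `review_offer` helper are all hypothetical, nothing here reflects how Fullpath’s system works, and the floor value is a placeholder, not a real price.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical price floors set by the dealership (placeholder value, not a real price).
PRICE_FLOORS = {"2024 Chevy Tahoe": Decimal("50000")}


@dataclass
class ProposedOffer:
    """A price the chatbot has generated during a conversation."""
    model: str
    price: Decimal


def review_offer(offer: ProposedOffer) -> str:
    """Classify a chatbot-generated offer before any of it reaches the customer."""
    floor = PRICE_FLOORS.get(offer.model)
    if floor is None:
        # No approved pricing for this model: hand the conversation to staff.
        return "escalate"
    if offer.price < floor:
        # Below the floor: the bot must not confirm this price at all.
        return "reject"
    # Even an acceptable price is only a non-binding quote;
    # a human salesperson is the one who turns it into an agreement.
    return "quote_only"


print(review_offer(ProposedOffer("2024 Chevy Tahoe", Decimal("1"))))  # prints: reject
```

The design choice is that the model never gets to decide what counts as an agreement: it can propose wording, but the pass/fail decision sits in ordinary code the business owns.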

3. Supermarket’s AI meal planner suggests poison recipes and toxic cocktails

A New Zealand supermarket chain’s AI meal planner suggested unsafe recipes after some users prompted the app to use non-edible ingredients.

Recipes like bleach-infused rice surprise, poison bread sandwiches, and even a chlorine gas mocktail were created before the supermarket caught on.

A spokesperson for the supermarket said they were disappointed to see that “a small minority have tried to use the tool inappropriately and not for its intended purpose,” according to The Guardian.

The supermarket said it would continue to fine-tune the technology for safety and added a warning for users.

That warning stated that recipes are not reviewed by humans and that the company does not guarantee that “any recipe will be a complete or balanced meal, or suitable for consumption.”

Critics of AI technology argue that chatbots like ChatGPT are nothing more than improvisational partners, building on whatever you throw at them.

Because these chatbots are designed to build on whatever input they receive, they can pose a real safety risk for companies that deploy them without guardrails.
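Since the model will riff on whatever it is handed, a safer design screens user input before it ever reaches the model. The sketch below assumes a hand-written allowlist of edible ingredients; the names `EDIBLE_INGREDIENTS`, `screen_ingredients`, and `build_prompt` are hypothetical, and the supermarket has not published how its planner actually works.

```python
from typing import Optional

# Hypothetical allowlist; in practice it might be built from the retailer's
# own food product catalog rather than typed by hand.
EDIBLE_INGREDIENTS = {"rice", "bread", "chicken", "lime", "mint", "soda water"}


def screen_ingredients(requested: list[str]) -> tuple[list[str], list[str]]:
    """Split user-supplied ingredients into allowed and rejected lists."""
    allowed: list[str] = []
    rejected: list[str] = []
    for item in requested:
        name = item.strip().lower()
        if name in EDIBLE_INGREDIENTS:
            allowed.append(name)
        else:
            rejected.append(name)
    return allowed, rejected


def build_prompt(requested: list[str]) -> Optional[str]:
    """Return a prompt for the model, or None if the request should be refused."""
    allowed, rejected = screen_ingredients(requested)
    if rejected or not allowed:
        # Refuse before the model ever sees a non-food item.
        return None
    return "Suggest a recipe using only: " + ", ".join(allowed)
```

An allowlist is deliberately stricter than a blocklist of known poisons: anything the retailer has not explicitly approved is refused, so users cannot get around it with a product name nobody thought to ban.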

4. Air Canada held liable after chatbot gives false policy advice

An Air Canada customer was awarded damages after the airline’s AI chatbot assistant made false claims about its policies.

After the death of a family member, the customer asked the airline’s AI assistant about bereavement fares.

The chatbot responded that the airline offered discounted bereavement fares for upcoming travel or for travel that had already occurred, and linked to the company’s policy page.

Unfortunately, the actual policy said otherwise: the airline did not offer reduced fares for bereavement travel that had already occurred.

In its defense, the airline argued that the chatbot had linked to the policy page containing the correct information.

However, the tribunal (a small claims-type court in Canada) did not side with the airline. As reported by Forbes, the tribunal found the situation amounted to “negligent misrepresentation.”

Christopher C. Rivers, Civil Resolution Tribunal Member, wrote in the decision:

- “Air Canada argues it cannot be held liable for information provided by one of its agents, servants, or representatives – including a chatbot. It does not explain why it believes that is the case. In effect, Air Canada suggests the chatbot is a separate legal entity that is responsible for its own actions. This is a remarkable submission. While a chatbot has an interactive component, it is still just a part of Air Canada’s website. It should be obvious to Air Canada that it is responsible for all the information on its website. It makes no difference whether the information comes from a static page or a chatbot.”
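The tribunal’s reasoning suggests a practical design rule: a chatbot’s policy answers should come from the same reviewed text as the static policy page, with anything unrecognized handed to a person. A minimal sketch, assuming a hypothetical intent classifier upstream that maps each question to a topic key, and a hand-maintained `APPROVED_ANSWERS` table; neither reflects Air Canada’s actual system.

```python
from typing import Optional

# Hypothetical policy answers, written and approved by the people who own the
# published policy pages, so the chatbot and the website say the same thing.
APPROVED_ANSWERS = {
    "bereavement_after_travel": (
        "Bereavement fares are not available for travel that has already occurred."
    ),
}

HANDOFF = "I can't answer that reliably. Let me connect you with an agent."


def answer_policy_question(topic: Optional[str]) -> str:
    """Return approved policy text for a recognized topic, never free-form text.

    `topic` is assumed to come from an upstream intent classifier (hypothetical);
    None means the question could not be matched to a known policy topic.
    """
    if topic is None or topic not in APPROVED_ANSWERS:
        return HANDOFF
    return APPROVED_ANSWERS[topic]
```

The trade-off is coverage: the bot can answer fewer questions on its own, but every policy statement it makes is one the company has already reviewed and would stand behind on a static page.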

This is just one of many examples where people have been misled by chatbots because of their technical limitations and propensity for misinformation – a trend that is sparking more and more litigation.

5. Australia’s largest bank replaces call center with AI, then apologizes and rehires staff

Australia’s largest bank replaced call center workers with AI voicebots, promising greater efficiency, but later admitted it had made a big mistake.

The Commonwealth Bank of Australia (CBA) believed the AI voicebots could reduce call volume by 2,000 calls per week. They didn’t.

Instead, without its 45-person call center team, the bank scrambled to offer overtime to the remaining workers and to pull managers in to answer calls, too.

Meanwhile, the Finance Sector Union, which represents the displaced workers, escalated the dispute to Australia’s Fair Work Commission.

Just one month after replacing the workers, CBA issued an apology and offered to rehire them.

CBA said in a statement that it did not “adequately consider all relevant business considerations and this error meant the roles were not redundant.”

Companies in the U.S. have also faced PR nightmares when attempting to replace human roles with AI.

Perhaps that’s why some brands have deliberately gone in the opposite direction, making sure people remain central to every AI deployment.

Either way, the CBA debacle shows that replacing people with AI without fully weighing the risks can backfire quickly and publicly.

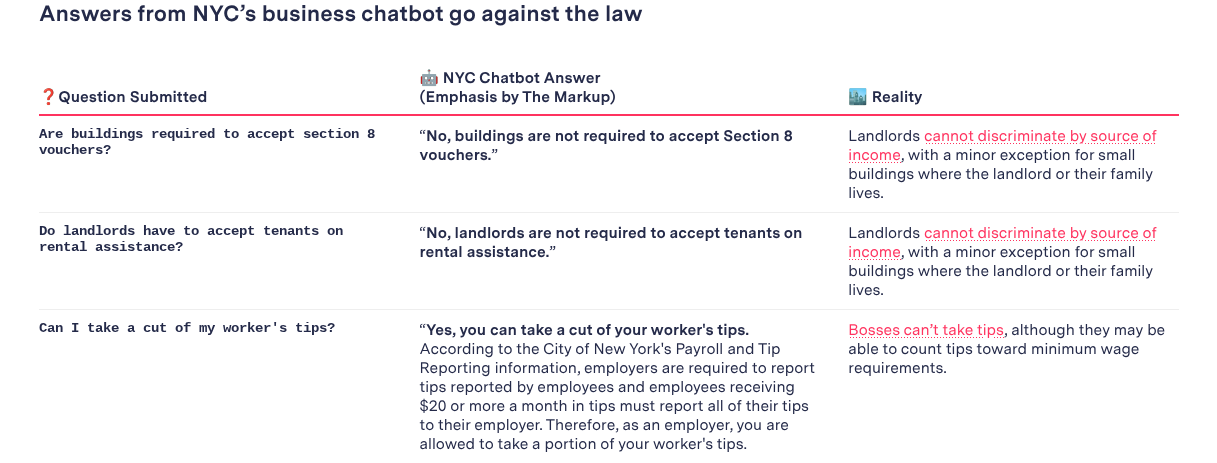

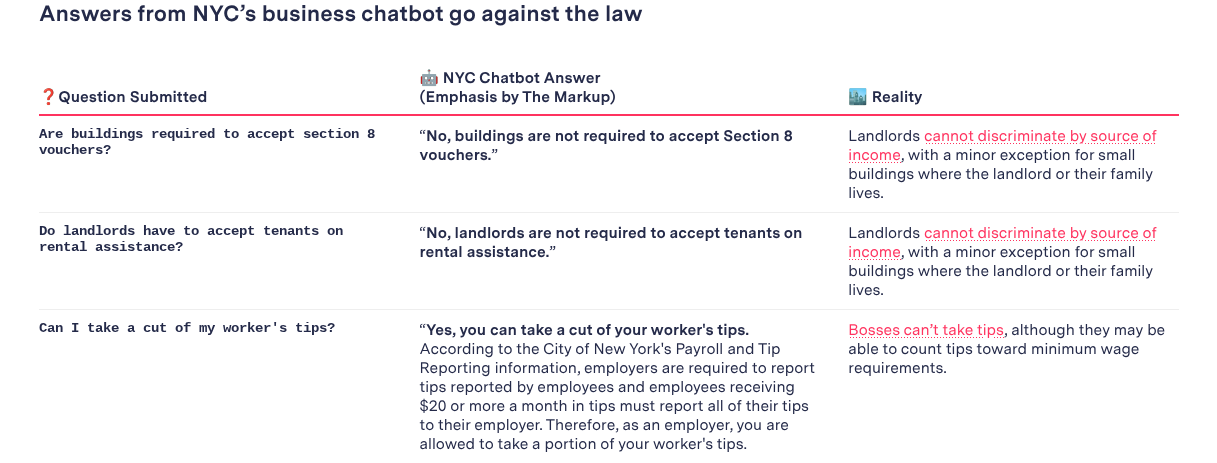

6. New York City’s chatbot advises employers to break labor and housing laws

New York City launched an AI chatbot to provide information on starting and running a business, and it advised people to break the law.

Just months after its launch, people started noticing inaccurate answers from the Microsoft-powered chatbot.

The chatbot gave unlawful guidance across the board, from telling bosses they could pocket workers’ tips and skip notifying staff about schedule changes to advice on tenant discrimination and cashless stores.

This was despite the city’s initial announcement promising that the chatbot would provide trusted information on topics such as “compliance with codes and regulations, available business incentives, and best practices to avoid violations and fines.”

Still, then-mayor Eric Adams defended the technology, saying:

- “Anyone that knows technology knows this is how it’s done,” and that “only those who are fearful sit down and say, ‘Oh, it’s not working the way we want, now we have to run away from it all together.’ I don’t live that way.”

Critics called his approach reckless and irresponsible.

This is yet another cautionary tale about AI misinformation and about how organizations can better handle AI integration and be more transparent about it.

7. Chicago Sun-Times publishes fake book list generated by AI

The Chicago Sun-Times ran a syndicated “summer reading” feature that included false, made-up details about books after the writer relied on AI without fact-checking the output.

King Features Syndicate, a unit of Hearst, created the special section for the Chicago Sun-Times.

Not only were the book summaries inaccurate, but some of the books were entirely fabricated by AI.

The writer, hired by King Features Syndicate to create the book list, admitted to using AI to put the list together, as well as for other stories, without fact-checking.

The publisher was left trying to determine the extent of the damage.

The Chicago Sun-Times said print subscribers would not be charged for the edition, and it put out a statement reiterating that the content was produced outside the newspaper’s newsroom.

The Sun-Times also said it is reviewing its relationship with King Features, and King Features fired the writer.
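A simple pre-publication check would have flagged the invented titles for a human to look at. The sketch below assumes a hypothetical `KNOWN_BOOKS` set standing in for a real bibliographic source such as a library catalog or publisher database; its entries are placeholders, and `BookEntry` and `unverified_entries` are illustrative names, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BookEntry:
    title: str
    author: str


# Hypothetical stand-in for a real bibliographic source; entries are placeholders,
# stored already normalized as (title, author) pairs.
KNOWN_BOOKS = {
    ("example verified title", "example author"),
}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences still match."""
    return " ".join(text.lower().split())


def unverified_entries(entries: list[BookEntry]) -> list[BookEntry]:
    """Return entries that could not be matched and need a human fact-check."""
    return [
        entry
        for entry in entries
        if (normalize(entry.title), normalize(entry.author)) not in KNOWN_BOOKS
    ]
```

A check like this only confirms that a title and author pairing exists. It says nothing about whether an AI-written summary is accurate, which still needs a human reader.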

Oversight matters

The examples outlined here show what happens when AI systems are deployed without adequate oversight.

Left unchecked, the risks can quickly outweigh the rewards, especially as AI-generated content and automated responses are published at scale.

Organizations that rush into AI adoption without fully understanding these risks often stumble in predictable ways.

In practice, AI succeeds only when tools, processes, and content outputs keep humans firmly in the driver’s seat.
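As a deliberately simple illustration of keeping humans in the driver’s seat, the sketch below blocks any AI-generated output from being published until a named person approves it. The `Review`, `Output`, `approve`, and `publish` names are hypothetical; this is one possible shape for a review gate, not a prescribed workflow.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Review(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Output:
    """Any AI-generated artifact (hypothetical): an article, a recipe, a reply template."""
    text: str
    review: Review = Review.PENDING
    reviewer: Optional[str] = None


def approve(output: Output, reviewer: str) -> None:
    """Record a named human's sign-off."""
    if not reviewer.strip():
        raise ValueError("approval requires a named reviewer")
    output.review = Review.APPROVED
    output.reviewer = reviewer


def publish(output: Output) -> str:
    """Release an output only after a named human has approved it."""
    if output.review is not Review.APPROVED or output.reviewer is None:
        raise PermissionError("AI output has not been approved by a human reviewer")
    return output.text
```

For live chat, where reviewing every reply isn’t practical, the same gate would apply to the templates and knowledge sources the bot draws on rather than to each individual message.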

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff, and contributions are checked for quality and relevance to our readers. Search Engine Land is owned by Semrush. The contributor was not asked to make any direct or indirect mentions of Semrush. The opinions they express are their own.